
Here again, we use an import-statement approach, making sure that the libraries in question are available on the cluster that executes the code or within the current session.
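One way to make "the libraries are available" explicit is to check for them before importing. The sketch below uses only the standard library (`importlib.util.find_spec` and `importlib.import_module`); the helper name `require` is hypothetical, and it assumes top-level module names such as `pandas` rather than dotted submodule names.

```python
import importlib
import importlib.util


def require(*module_names):
    """Import each top-level module, failing early with one clear message if any is missing.

    Meant to run at the top of a notebook so a library that is missing from the
    cluster or session is reported before other cells depend on it.
    """
    missing = [name for name in module_names if importlib.util.find_spec(name) is None]
    if missing:
        raise ImportError("Not available on this cluster/session: " + ", ".join(missing))
    # Every module was found, so these imports should succeed.
    return {name: importlib.import_module(name) for name in module_names}


# Example using standard-library modules that are always present.
mods = require("json", "csv")
print(sorted(mods))
```

On a cluster you would pass the third-party packages your notebook needs; the error then names every missing one at once instead of failing on the first `import`.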

# Jupyter notebook file extension install

# Jupyter notebook file extension how to

Example: importing native Python modules into a Databricks notebook.
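A common pattern for this is to put the folder that holds your `.py` files on `sys.path` and then use a plain `import`. The sketch below assumes such a folder exists; the Databricks path in the comment and the module name `nb_helpers` are hypothetical, and a temporary folder stands in for the real location so the example runs anywhere.

```python
import importlib
import sys
import tempfile
from pathlib import Path

# Hypothetical: in Databricks this might be a folder such as
# "/Workspace/Repos/<user>/<repo>/src". A temp folder stands in for it here.
module_dir = Path(tempfile.mkdtemp())
(module_dir / "nb_helpers.py").write_text(
    "def greet(name):\n"
    "    return f'Hello, {name}!'\n"
)

# Make the folder importable for this session only; the change is not persisted.
if str(module_dir) not in sys.path:
    sys.path.append(str(module_dir))

# Refresh import-system caches so a newly written file is found.
importlib.invalidate_caches()

import nb_helpers  # an ordinary Python import now works

print(nb_helpers.greet("Databricks"))  # prints "Hello, Databricks!"
```

Because `sys.path` changes last only for the current session, the path setup has to run again after the cluster or notebook session restarts.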

# Jupyter notebook file extension archive

If the archive contains a folder, Databricks recreates that folder in the workspace. However, I don't believe there is currently a way to clone a repo containing a directory of notebooks into a Databricks workspace. To import a Databricks notebook that you want to execute via Data Factory, upload the notebook or provide a path to it. Note that some configurations may need to be adjusted to work in the Databricks environment.

Notebook-scoped R libraries enable you to create and modify custom R environments that are specific to a notebook session; Databricks offers notebook-scoped Python libraries in the same spirit (see "Notebook-scoped Python libraries" in the Databricks on AWS documentation). Code that imports a Python module into a Databricks notebook does not necessarily work when it is imported into a plain Python script; in that case, the library cannot be imported.

Spark is a unified analytics engine that can work with a wide range of databases, data caching services, and data warehouses.

The sample notebook we're going to work with demonstrates a number of capabilities available in Databricks. Let's start by viewing our new table with `%sql SELECT * FROM covid`. Then complete the questions; they are fairly straightforward.
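A Databricks archive is a JAR file, and JAR files use the ZIP format, so Python's `zipfile` module can show which folders an archive contains, that is, which folders an import would recreate. This is a sketch under assumptions: it treats every `/`-separated parent of an entry as a folder, and the entry names below are made up, stored in an in-memory ZIP that stands in for a real archive file.

```python
import io
import zipfile
from pathlib import PurePosixPath


def folders_in_archive(archive):
    """Return the sorted folder paths implied by the entries of a ZIP/JAR file.

    `archive` may be a filesystem path or a binary file-like object.
    """
    folders = set()
    with zipfile.ZipFile(archive) as zf:
        for name in zf.namelist():
            if name.endswith("/"):
                # Explicit directory entry, e.g. "project/".
                folders.add(name.rstrip("/"))
            # Every parent of an entry is a folder (ZIP paths always use "/").
            for parent in PurePosixPath(name).parents:
                if str(parent) != ".":
                    folders.add(str(parent))
    return sorted(folders)


# Made-up stand-in archive; a real call would pass the path of your archive file.
buf = io.BytesIO()
with zipfile.ZipFile(buf, "w") as zf:
    zf.writestr("project/etl/load.py", "print('load')")
    zf.writestr("project/report.py", "print('report')")
buf.seek(0)

print(folders_in_archive(buf))
```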

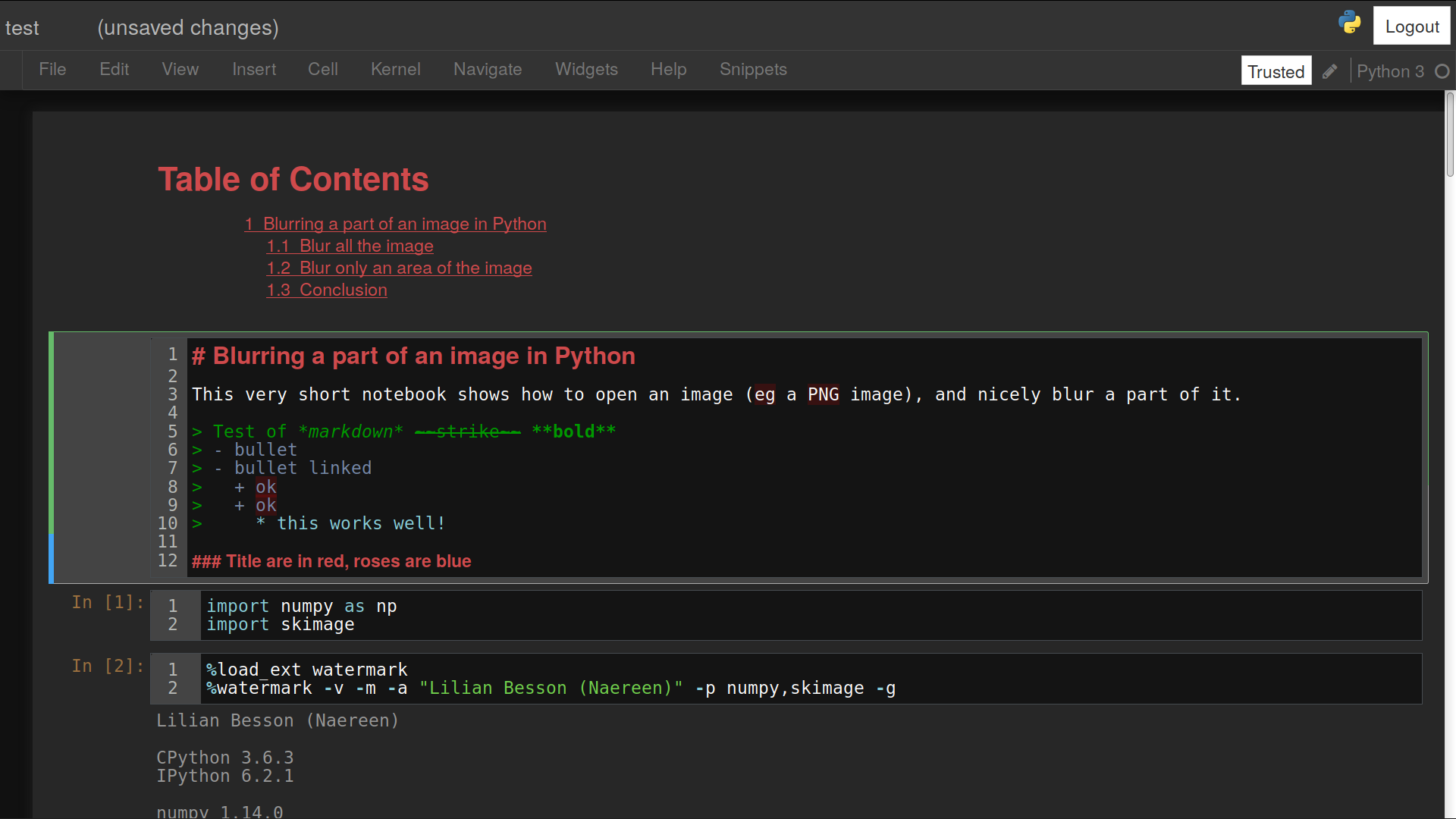

Although this is a Python notebook, Databricks supports multiple languages inside it: a cell can switch language with a magic command such as `%sql`, `%scala`, or `%r`. For the complete reference, see the Azure Databricks documentation. You can import a notebook into the workspace using the tab on the left. For workspace assets such as notebooks and security settings (users and groups), Databricks provides REST APIs to manage, export, and import them.
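For notebooks, import and export go through the Workspace REST API (`/api/2.0/workspace/import` and `/api/2.0/workspace/export`). The sketch below only builds the HTTP requests with the standard library and does not send them; the workspace URL, token, and notebook paths are hypothetical placeholders, and you should check the current API reference for your workspace before relying on the exact fields.

```python
import base64
import json
import urllib.parse
import urllib.request

# Hypothetical placeholders: replace with your workspace URL and a personal access token.
HOST = "https://<your-workspace>.cloud.databricks.com"
TOKEN = "<personal-access-token>"


def build_import_request(host, token, path, source_code, language="PYTHON"):
    """Build (but do not send) a POST that imports source code as a notebook."""
    payload = {
        "path": path,          # destination path in the workspace
        "format": "SOURCE",    # plain source file
        "language": language,  # required when format is SOURCE
        "content": base64.b64encode(source_code.encode("utf-8")).decode("ascii"),
        "overwrite": True,
    }
    return urllib.request.Request(
        url=f"{host}/api/2.0/workspace/import",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Authorization": f"Bearer {token}", "Content-Type": "application/json"},
        method="POST",
    )


def build_export_request(host, token, path, fmt="JUPYTER"):
    """Build (but do not send) a GET that exports a notebook, e.g. as .ipynb or DBC."""
    query = urllib.parse.urlencode({"path": path, "format": fmt})
    return urllib.request.Request(
        url=f"{host}/api/2.0/workspace/export?{query}",
        headers={"Authorization": f"Bearer {token}"},
        method="GET",
    )


req = build_import_request(HOST, TOKEN, "/Users/someone@example.com/demo", "print('hi')")
print(req.get_method(), req.full_url)
# To actually send it: urllib.request.urlopen(req)
```

The export response carries the notebook as base64 in its `content` field, so decoding it with `base64.b64decode` gives you the `.ipynb` (or other format) bytes.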

… such as creating a library, using BricksFlow, or orchestration. In Databricks, however, we have notebooks instead of modules, so the classical `import` statement doesn't work for them (at least not yet). A Databricks archive is a JAR file with extra metadata and its own file extension.

In the Data menu, you can generate a notebook for each option that demonstrates how to import the data and convert it to a table. They are essentially a presentation-friendly view of a Databricks notebook. This is the place where you can import libraries. In the notebook, Python code is used to fit a number of machine learning models on a sample data set. Import these samples directly from your workspace.
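Outside Databricks (where notebooks are usually combined with `%run`), a Jupyter `.ipynb` file can be loaded much like a module, because the file is a JSON document whose code cells can be executed into a fresh module object. The sketch below is a simplified version of that "import a notebook as a module" pattern, not how Databricks resolves notebooks; the names `import_notebook` and `nb_demo` are hypothetical, and it simply drops lines that start with `%` or `!` (IPython magics and shell escapes), which can misfire inside multi-line strings.

```python
import json
import os
import sys
import tempfile
import types


def import_notebook(path, module_name):
    """Execute the code cells of a .ipynb file, in order, inside a new module object."""
    with open(path, encoding="utf-8") as f:
        nb = json.load(f)  # .ipynb files are JSON documents

    module = types.ModuleType(module_name)
    module.__file__ = path
    sys.modules[module_name] = module

    for cell in nb.get("cells", []):
        if cell.get("cell_type") != "code":
            continue
        source = cell.get("source", "")
        # nbformat stores a cell's source either as one string or as a list of lines.
        if isinstance(source, list):
            source = "".join(source)
        # Skip IPython magics and shell escapes, which plain Python cannot run.
        lines = [ln for ln in source.splitlines() if not ln.lstrip().startswith(("%", "!"))]
        exec(compile("\n".join(lines), path, "exec"), module.__dict__)
    return module


# Build a tiny made-up notebook on disk to demonstrate the loader.
cells = [
    {"cell_type": "markdown", "metadata": {}, "source": ["# Helpers"]},
    {"cell_type": "code", "metadata": {}, "execution_count": None, "outputs": [],
     "source": ["%matplotlib inline\n", "def add(a, b):\n", "    return a + b\n"]},
]
with tempfile.NamedTemporaryFile("w", suffix=".ipynb", delete=False, encoding="utf-8") as tmp:
    json.dump({"nbformat": 4, "nbformat_minor": 5, "metadata": {}, "cells": cells}, tmp)

helpers = import_notebook(tmp.name, "nb_demo")
print(helpers.add(2, 3))
os.remove(tmp.name)
```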

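Troubleshooting articles in a problem/cause/solution format cover issues such as "Cannot import TabularPrediction from AutoGluon" and "Latest PyStan fails to install on Databricks Runtime 6". A Databricks notebook can be synced to an Azure DevOps (ADO), GitHub, or Bitbucket repo. To install a library on a cluster from PyPI, choose PyPI as the source, enter `azureml-sdk` as the package name, and select Install Library.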
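The same cluster-wide install can be requested through the Libraries REST API (`/api/2.0/libraries/install`); for a library scoped to one notebook session, `%pip install azureml-sdk` in a cell is the usual alternative. The sketch below only builds the request and does not send it; the workspace URL, token, and cluster ID are hypothetical placeholders.

```python
import json
import urllib.request

# Hypothetical placeholders for your workspace, token and target cluster.
HOST = "https://<your-workspace>.cloud.databricks.com"
TOKEN = "<personal-access-token>"
CLUSTER_ID = "<cluster-id>"


def build_pypi_install_request(host, token, cluster_id, package):
    """Build (but do not send) a Libraries API call that installs a PyPI package on a cluster."""
    payload = {
        "cluster_id": cluster_id,
        "libraries": [{"pypi": {"package": package}}],
    }
    return urllib.request.Request(
        url=f"{host}/api/2.0/libraries/install",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Authorization": f"Bearer {token}", "Content-Type": "application/json"},
        method="POST",
    )


req = build_pypi_install_request(HOST, TOKEN, CLUSTER_ID, "azureml-sdk")
print(json.loads(req.data))
# Sending it: urllib.request.urlopen(req)
```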

You should see a table like this:

# Notebooks embedded in the docs

# Databricks import library in notebook

- `get_library_statuses`: Get the status of libraries on Databricks clusters.
- `get_run_status`: Get the status of a job run on Databricks.
- `hello`: Hello, World!
- `import_to_workspace`: Import code to the …

Databricks runtimes include many popular libraries.
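To illustrate what a job-run status check involves, here is a Python sketch that interprets the `state` object in the response of the Jobs API `runs/get` endpoint (`GET /api/2.1/jobs/runs/get`). It is not the implementation of `get_run_status` listed above; the sample response is made up, and the set of terminal life-cycle states is an assumption worth checking against the current API reference.

```python
import json

# Made-up response body in the shape returned by GET /api/2.1/jobs/runs/get.
sample_response = json.loads("""
{
  "run_id": 42,
  "state": {
    "life_cycle_state": "TERMINATED",
    "result_state": "SUCCESS",
    "state_message": ""
  }
}
""")

# Assumed terminal life-cycle states: the run will not change state after these.
FINISHED_STATES = {"TERMINATED", "SKIPPED", "INTERNAL_ERROR"}


def summarize_run_state(run):
    """Return (is_finished, succeeded) for a runs/get response dictionary."""
    state = run.get("state", {})
    finished = state.get("life_cycle_state") in FINISHED_STATES
    # result_state is only meaningful once the run has finished.
    succeeded = finished and state.get("result_state") == "SUCCESS"
    return finished, succeeded


print(summarize_run_state(sample_response))
```

A polling loop would call `runs/get` periodically and stop once `is_finished` is true, then branch on `succeeded`.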